Use AI, Don't Let AI Use You.

Brainlessly using AI to solve your problems will eventually make you a worse engineer.

We are now 17 months out from ChatGPT's public release, and what a whirlwind it has been. Venture capital money is being dumped into GPT wrappers, Nvidia’s stock is up 1000%, my Grandma asks me once a month if I’m going to lose my job, and who knows what comes out tomorrow.

Not so fast, Grandma…

I am not entirely sure how AI is used in other fields (maybe to write nicer emails), but I do know that I use it in one way, shape, or form every day. Some of my use cases are:

Escaping YAML indentation hell.

Syntax verification/cleanup.

Speeding up small, manual tasks.

Debugging.

Explaining new code concepts in plain language.

Essentially, my workflow has gone from:

Working → Question → Google.

to:

Working → Question → AI hallucination → Google.

With AI now part of my workflow, there are some best practices I follow to make sure I am getting the most out of it while continuing to grow in my career:

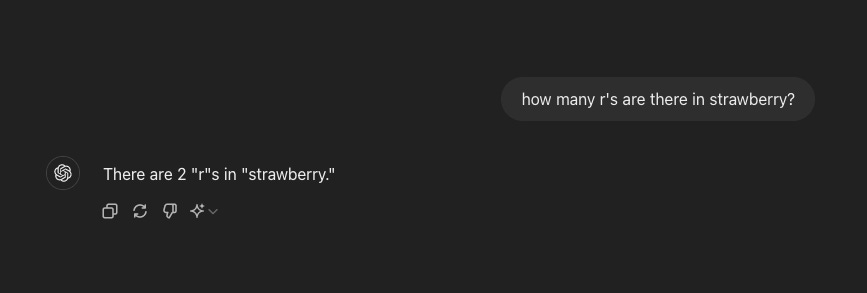

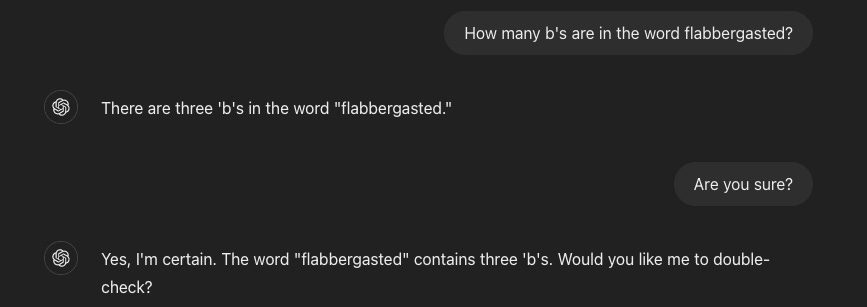

AI is incorrect, a lot.

Have you ever asked ChatGPT to do something in Ansible? Then you’ve probably seen it make up a task that is documented in no library anywhere, ever. Are you working with the Azure SDK in Python? Good luck finding answers once your problem becomes more complex. ChatGPT is not the only AI, you say? Have you ever even tried Bing Copilot?

I say all of that to say: when you are trying to do anything past beginner level, don’t look to AI as your savior. You will end up with hallucinated code. LLMs are made to sound correct, not to be correct. AI is now the leading disinformation vector in the world. If you are using AI for facts, you are simply using it wrong. It is just another resource, like Google, and its answers need the same checking.
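One cheap way to catch a made-up function before you build on it is to ask the library itself. Here is a minimal Python sketch, assuming the suggested code targets a module you have installed. The helper name `symbol_exists` is hypothetical, and passing this check only tells you the name exists, not that it does what the AI claimed.

```python
import importlib


def symbol_exists(module_name: str, attr_path: str) -> bool:
    """Return True if module_name imports and exposes the dotted attr_path."""
    try:
        obj = importlib.import_module(module_name)
    except ImportError:
        # The module itself is missing (or invented).
        return False
    # Walk the dotted path one attribute at a time, e.g. "path.join".
    for part in attr_path.split("."):
        if not hasattr(obj, part):
            return False
        obj = getattr(obj, part)
    return True


if __name__ == "__main__":
    print(symbol_exists("json", "dumps"))        # real function
    print(symbol_exists("json", "dump_pretty"))  # plausible-sounding, not real
```

If the check fails, go read the docs before you go back to the chat window.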

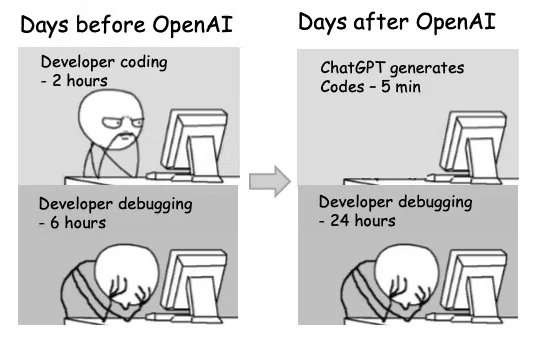

Learning is beneficial.

I, and many others, are more efficient at work today because of AI. Companies that endorse AI to their workforce can ship faster as a result. With that comes the possibility of codependence. Brainlessly feeding AI all of your errors, challenges, questions, etc., without ever understanding how to solve the problems yourself will ultimately burn you as an engineer in the long run.

I like to learn new things, and I love to solve complex problems. I’ve spent the last three days (and will spend the next week or so) trying to write a service bus for no good reason except for the sake of learning. I could hand ChatGPT the entire project and worry about debugging its hallucinations, or I could write it myself, go to AI when “needed”, and be better at Golang as a result. Maybe the finished product is what you’re worried about, which is understandable, but good luck using ChatGPT in your next technical interview.

Don’t be a product.

“If something is free, you are probably the product.” Ever looked at uBlock Origin while **NAME FREE INTERNET SERVICE** is open? Do you think that “free-tier” of yours is really free?

This holds true for AI. There are plenty of AI coding assistants that are “free”, have free tiers, or are free for students. While students code their way through college, typing and waiting for their assistant to provide an answer, better models are trained on that same code, and the students slide into the codependence I spoke of. Eventually, we go into the workforce needing a Copilot to do our jobs. While companies eat the cost of API calls today, they profit in the long run off half-baked engineers.

Security, or lack thereof.

There are unprecedented security implications to you and your entire team “CTRL + V-ing” your API keys, passwords, and secrets into an LLM. Yes, the AI needs context, but please take a few seconds to sanitize your queries. We do not know the depth of this problem today, but I don’t see any upside in shipping secrets to someone else’s black box.
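Sanitizing does not have to be a manual chore. Below is a minimal Python sketch of a scrubber you could run over a log or config snippet before pasting it anywhere. The patterns, the `sanitize` function, and the `<REDACTED>` placeholder are all assumptions made for illustration. The list is far from exhaustive and will miss secret formats it has never heard of, so treat it as a first pass and still read what you paste.

```python
import re

# Hypothetical patterns for illustration only; extend them for the
# secret formats your team actually uses.
SECRET_PATTERNS = [
    # key=value or key: value pairs whose key name suggests a secret,
    # e.g. API_KEY=..., DB_PASSWORD: ..., "client_secret": "..."
    re.compile(
        r"(?i)([\w-]*(?:api[_-]?key|password|passwd|secret|token)[\w-]*)"
        r"([\"']?\s*[:=]\s*)(\S+)"
    ),
    # Bearer tokens in HTTP headers
    re.compile(r"(?i)\b(bearer)(\s+)([A-Za-z0-9._~+/=-]+)"),
]


def sanitize(text: str) -> str:
    """Replace values that look like secrets with a placeholder."""
    for pattern in SECRET_PATTERNS:
        # Keep the key and separator, drop only the secret value.
        text = pattern.sub(
            lambda m: f"{m.group(1)}{m.group(2)}<REDACTED>", text
        )
    return text


if __name__ == "__main__":
    snippet = "API_KEY=abc123\nAuthorization: Bearer abc.def-123"
    print(sanitize(snippet))
```

The key names stay in the output on purpose, so the AI still gets the context it needs without getting the values.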

TL;DR

All in all, AI is very useful and can increase productivity and efficiency across organizations everywhere. Even so, there are real downsides to adopting AI into your workflow without proper due diligence: disinformation, stunted growth, commodification of users, and security risks. Users should adopt practices that take advantage of the benefits of AI and LLMs while also protecting their learning, their career growth, and their independence from AI.