Kubernetes the not so hard way?

Bootstrapping a highly available Kubernetes cluster at home.

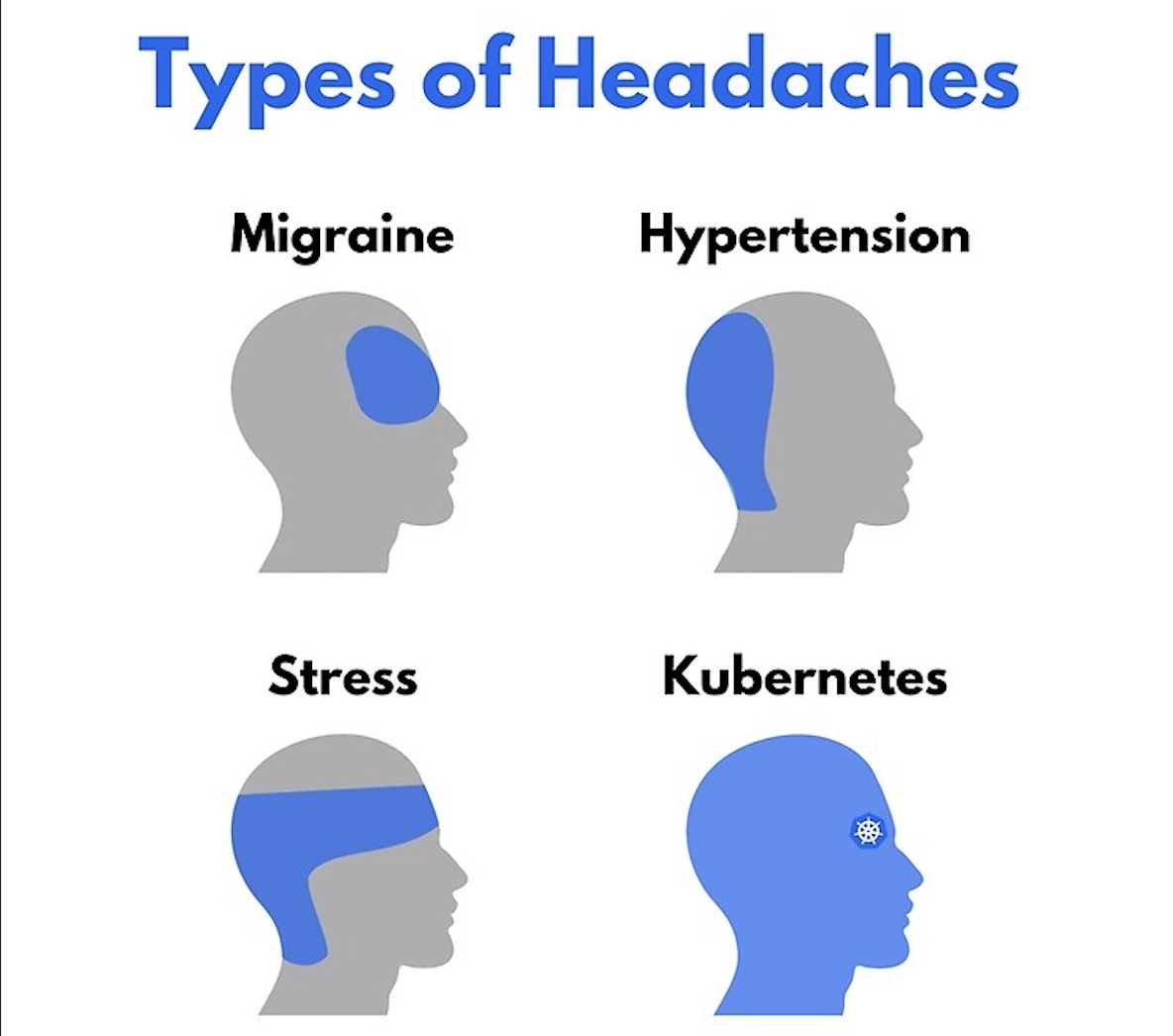

Kubernetes (k8s) is not easy. In fact, it is one of the most complex pieces of software out there. That’s why there are plenty of managed services to abstract away the pain and complexity of running Kubernetes (AKS, EKS, GKE, ACA, acronym hell, etc.). Learning Kubernetes is also not easy, and again, many tools will help you bootstrap a k8s cluster without the complexity of going from a few VMs to a fully functional cluster (minikube, kind, etc.). There are also lightweight distributions of k8s that don’t have all the features of vanilla k8s (k3s, k0s, k9999999s).

There are many tutorials to help you build a Kubernetes cluster from the ground up (and I’m talking about compiling your own hypervisor from the ground up).

All of that is to say: despite the complexity, I am going to show you how to bootstrap a fully functional, highly available vanilla Kubernetes cluster over a few different parts. Get ready to rumble…

Prerequisites

To complete this you’ll need:

Compute (meaning a hypervisor, many Raspberry Pis, a bunch of NUCs, etc.).

A network you have administrative access to.

No life.

What is Talos Linux?

For this multipart blog series, I’ll be using Talos Linux, a stripped-down OS that is essentially just Kubernetes.

Talos is a container-optimized Linux distro: a reimagining of Linux for distributed systems such as Kubernetes. It is designed to be as minimal as possible while still remaining practical.

Talos is just Kubernetes: it ships only 12 binaries, offers no SSH access to the nodes, and can only be managed through its API using talosctl. In a few minutes, you can go from nothing to a highly available cluster. It is a great project for running Kubernetes on bare metal, whether you want to learn or plan to run it in production on-prem.
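Because every Talos node is managed through talosctl rather than SSH, the "nothing to a cluster" path is really a short sequence of talosctl calls. Below is a minimal Python sketch of that order: generate configs, apply them to each node, bootstrap etcd once on a single control-plane node, then fetch a kubeconfig. The helper `build_bootstrap_plan`, the cluster name, the endpoint, and all IP addresses are hypothetical placeholders, not values from this series. It assumes the endpoint points at the control plane (for HA, typically a VIP or load balancer) and that the nodes are booted into maintenance mode. By default it only prints the commands. With `--run` it executes them, but it does not wait for nodes to reboot between steps, which a real bring-up needs.

```python
import shlex
import subprocess
import sys


def build_bootstrap_plan(cluster_name, endpoint, control_planes, workers):
    """Return talosctl invocations (argv lists) in the order they would run.

    Hypothetical helper for illustration; it only builds commands.
    """
    if not control_planes:
        raise ValueError("at least one control-plane node is required")
    first_cp = control_planes[0]
    talosconfig = "talosconfig"  # written to the cwd by `talosctl gen config`

    # 1. Generate controlplane.yaml, worker.yaml and talosconfig.
    plan = [["talosctl", "gen", "config", cluster_name, endpoint]]

    # 2. Push machine configs to nodes still in maintenance mode (hence --insecure).
    for ip in control_planes:
        plan.append(["talosctl", "apply-config", "--insecure",
                     "--nodes", ip, "--file", "controlplane.yaml"])
    for ip in workers:
        plan.append(["talosctl", "apply-config", "--insecure",
                     "--nodes", ip, "--file", "worker.yaml"])

    # 3. Bootstrap etcd exactly once, on a single control-plane node.
    plan.append(["talosctl", "bootstrap", "--nodes", first_cp,
                 "--endpoints", first_cp, "--talosconfig", talosconfig])

    # 4. Retrieve an admin kubeconfig for kubectl.
    plan.append(["talosctl", "kubeconfig", "--nodes", first_cp,
                 "--endpoints", first_cp, "--talosconfig", talosconfig])
    return plan


def main(argv):
    # Placeholder topology: three control-plane nodes for etcd quorum, two workers.
    plan = build_bootstrap_plan(
        "homelab",
        "https://192.168.1.100:6443",
        ["192.168.1.11", "192.168.1.12", "192.168.1.13"],
        ["192.168.1.21", "192.168.1.22"],
    )
    execute = "--run" in argv
    for cmd in plan:
        print(shlex.join(cmd))
        if execute:
            # Stops on the first failing command; no waiting or retries here.
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    main(sys.argv[1:])
```

Keeping the plan as plain argv lists makes the ordering easy to review before touching real hardware. The one step that must not be repeated is `bootstrap`, which is why it targets only the first control-plane node.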

Series Links

Anyway, after the struggle of getting this cluster to a baseline I am somewhat proud of, here is the four-part series “Kubernetes the not so hard way?” Welcome back to the Hyperbolic Chamber…

Conclusion

From nights up until 3 AM, to restoring etcd from backup twice, to bricking the kubelet on every node in the cluster, documenting how to stand up k8s on bare metal has been a good experience. I plan to write additional posts on some of the struggles, production issues, and upgrades I run into down the road. For now, I’ll go back to the thought pieces that people will actually read…